GPU accelerated voxel-driven forward projection for iterative reconstruction of cone-beam CT

BioMedical Engineering OnLine volume 16, Article number: 2 (2017)

Abstract

Background

For cone-beam computed tomography (CBCT), which plays an important role in clinical applications, iterative reconstruction algorithms are able to provide better image quality than the classical FDK algorithm. However, the computational speed of iterative reconstruction is a well-known issue for CBCT, and the forward projection calculation is one of its most time-consuming components.

Methods and results

In this study, the cone-beam forward projection problem using the voxel-driven model is analysed, and a GPU-based acceleration method for CBCT forward projection is proposed, with the method rationale and implementation workflow detailed as well. For method validation and evaluation, computational simulations are performed, and the calculation times of different methods are collected. Compared with the benchmark CPU processing time, the proposed method handles the inter-thread interference problem effectively, and an acceleration ratio of more than 100 is achieved relative to a single-threaded CPU implementation.

Conclusion

The voxel-driven forward projection calculation for CBCT is highly parallelized by the proposed method, and we believe it will serve as a critical module to develop iterative reconstruction and correction methods for CBCT imaging.

Background

Cone-beam computed tomography (CBCT) has advanced to serve as a widely available and commonly used imaging modality in clinical applications, such as dental diagnostics [1], image-guided radiotherapy [2], intraoperative navigation [3], and implant planning [4], and has broadened its usage in new settings, including breast cancer screening and endodontics [5]. However, due to the insufficient data conditioning caused by the circular trajectory, CBCT images are susceptible to artefacts, noise and the scatter effect [6]. In order to improve image quality, increasing research efforts have been directed towards iterative reconstruction algorithms [7].

For iterative methods, most computation time is spent calculating the forward and back projections iteratively, which are indispensable components to model the imaging geometry and X-ray physics. Owing to the use of high-resolution flat panel detectors in CBCT, when an iterative reconstruction algorithm is used, the computational load becomes a major issue. Thanks to the advent of graphic processing units (GPUs), massive computation power has been unleashed [8, 9]. In principle, forward and back projections can be generated with either a ray-driven or a voxel-driven approach. Although both methods deliver equivalent results with identical theoretical complexities, the computing operations differ in numerical implementation, as shown in Table 1 [9]. Moving the algorithm from CPUs to GPUs is not straightforward, because the concurrent threads write data to GPU memory in a scattered fashion [10]. The scatter operations potentially cause the inter-thread interference (or thread-racing) problem with write hazards. Since gather operations are more efficient than scatter operations thanks to faster memory reads, using unmatched projector–backprojector pairs in iterative methods has become a common solution, as in [11–13], where the ray-driven technique serves as the projector and the voxel-driven technique as the backprojector. Nevertheless, Zeng [14] has proved that this bypass scheme mathematically induces the iterative process to diverge from the true values, and so matched projector/backprojector pairs are preferred for their mathematical stability and robustness to noise.

Different projection models have been proposed as matched forward/back projector pairs, including distance-driven [15] and separable-footprint approaches [16], and some have been successfully GPU-accelerated with specific strategies [17–19]. Among these models, the voxel-driven method is extensively used to perform CBCT forward and back projections for its low complexity. While the voxel-driven backprojection is easy to accelerate on GPUs, the scatter nature of its matched forward projector (as in Table 1) makes the GPU implementation embarrassingly nonparallel, and, to our knowledge, an efficient GPU-based acceleration has not been reported yet.

In this study, a GPU acceleration method is presented to calculate voxel-driven forward projections for CBCT iterative reconstruction. This paper is organized as follows: the voxel-driven projection algorithm and the inter-thread interference problem are first investigated in "Voxel-driven model and inter-thread interference study" section; based on the analysis, the proposed GPU acceleration method is detailed in "Combating strategy by optimizing thread-grid allocation" section, with a brief workflow in "Implementation outline" section; as method validation, computational simulations are performed with results given in "Experiment and results" section; some issues are discussed and major conclusions are drawn in "Discussion and conclusion" section.

Methods

Voxel-driven model and inter-thread interference study

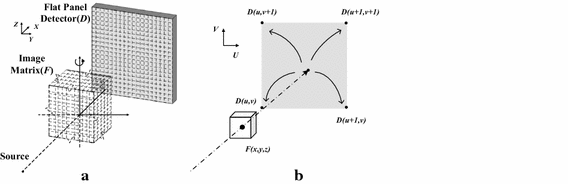

For a typical CBCT scanner, the patient (or scanned object) is kept stationary, and the X-ray source and the flat panel detector rotate simultaneously around the object in a circular trajectory. To facilitate the mathematical description, the scanned object is discretized as a three-dimensional image matrix, and the flat panel detector as a two-dimensional grid, as in Fig. 1a. In the voxel-driven method, the values of the image matrix are assumed to be located at the centre of each cubic voxel. To generate the two-dimensional forward projections for CBCT through the image matrix, the algorithm can be summarized in three steps: (1) draw a virtual line from the source (S) to a voxel centre (F(x,y,z)), which represents an X-ray pencil beamlet cast through the voxel; (2) extend the line from the voxel to intersect the detector plane at one point (U(u,v)), which represents the position where the traversing beamlet reaches the flat panel detector; (3) scatter the image value of the voxel into the adjacent detector units as a simplified model of X-ray signal detection, as illustrated in Fig. 1b.

Schematic of CBCT imaging (a) and the voxel-driven forward projection algorithm (b), where the image voxel value is scattered into the four adjacent detector units
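The three steps can be sketched for a single voxel as follows. This is a minimal illustration, not the authors' code: the coordinate convention (rotation about the z axis, source at angle `beta`, detector perpendicular to the central ray), the function name `project_voxel`, and the bilinear weights used for the scatter in step (3) are all assumptions made for the example.

```python
import math


def project_voxel(value, x, y, z, beta, sad, sdd, unit, n_det, proj):
    """Scatter one voxel value into the four detector units around its hit point."""
    # Steps (1)-(2): cast the ray from the source through the voxel centre
    # and extend it to the detector plane (assumed geometry, see lead-in).
    depth = sad - (x * math.cos(beta) + y * math.sin(beta))  # along the central ray
    mag = sdd / depth                                         # magnification at this voxel
    u = (-x * math.sin(beta) + y * math.cos(beta)) * mag
    v = z * mag
    # Fractional detector indices; unit centres sit at integer indices.
    fu = u / unit + (n_det - 1) / 2.0
    fv = v / unit + (n_det - 1) / 2.0
    iu, iv = math.floor(fu), math.floor(fv)
    wu, wv = fu - iu, fv - iv
    # Step (3): bilinear scatter (assumed weights) into the four neighbours.
    for du, dv, w in ((0, 0, (1 - wu) * (1 - wv)), (1, 0, wu * (1 - wv)),
                      (0, 1, (1 - wu) * wv), (1, 1, wu * wv)):
        row, col = iv + dv, iu + du
        if 0 <= row < n_det and 0 <= col < n_det:
            proj[row][col] += value * w  # on a GPU, this write is the racing one


# A voxel at the rotation centre lands between the four central units of a 4 x 4 detector.
proj = [[0.0] * 4 for _ in range(4)]
project_voxel(2.0, 0.0, 0.0, 0.0, 0.0, 800.0, 1000.0, 0.127, 4, proj)
```

Because every voxel adds to detector entries chosen by its own geometry, two voxels processed concurrently may target the same entry, which is the scatter behaviour discussed in the text.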

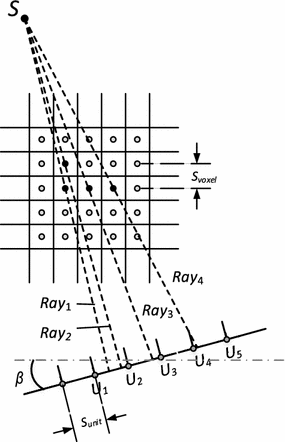

Conventionally, thread grids are allocated to adjacent voxels in axial planes, as reconstructed images are preferably displayed in the axial direction. To facilitate the analysis of the inter-thread problem, the three-dimensional forward projection scenario in CBCT is simplified into two dimensions, as illustrated in Fig. 2. The beamlets from the X-ray source (S) go through each voxel and are cast onto the detector. For two arbitrary voxels, the distance between their ray-casting intersections on the detector, Δu, can be derived from the imaging geometry as

$$\Delta u = \Delta v \cdot F_{g} \cdot \left| {\cos \beta } \right|$$

(1)

where β is the projection angle, \(F_{g}\) the geometric factor, and Δv the distance between the voxels.

Schematic of the voxel-driven forward projection algorithm for adjacent voxels at β

For two neighbouring voxels, Δv is equal to the voxel size, i.e.:

$$\Delta v = S_{voxel}$$

(2)

In the meantime, we can also rewrite the distance between the ray-casting intersections, Δu, using the detector unit size as

$$\Delta u = S_{unit} \cdot \Delta N$$

(3)

where ΔN represents the relative distance normalized by the detector unit size \(S_{unit}\).

The geometric factor \(F_{g}\) can be derived from the imaging geometry and written as

$$F_{g} = \frac{SDD}{SVD}$$

(4)

where SVD stands for the source-to-voxel distance, and SDD the source-to-detector distance.

For a typical CBCT, the size of the image voxel can be set according to the user's choice. Since the highest resolution is usually preferred by radiologists for more image details, the voxel size is taken as the detector unit size scaled back to the rotation axis, and can be expressed as

$$S_{voxel} = \left( {\frac{SAD}{SDD}} \right) \cdot S_{unit}$$

(5)

where SAD stands for source-to-axis distance.

When we replace the individual terms of Eq. (1) with Eqs. (2)–(5), the distance between the projection intersections of two neighbouring voxels is rewritten as:

$$\Delta N = \left( {\frac{SAD}{SVD}} \right) \cdot \left| {\cos \beta } \right|$$

(6)

where ΔN stands for the relative distance normalized by the detector unit size.

Due to the cone-beam effect in CBCT, the value of the first term, \(\left( {\frac{SAD}{SVD}} \right)\), depends on the beamlet, but is always around 1; the second term, |cos β|, is always less than or equal to 1.
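As a quick numerical check of this chain of substitutions, the sketch below evaluates ΔN both directly from Eq. (6) and step by step from Eqs. (1)–(4), assuming the voxel size is the detector unit size scaled back to the rotation axis, \(S_{voxel} = (SAD/SDD) \cdot S_{unit}\), which is the form Eq. (6) requires. The function names are hypothetical, and the sample distances are only illustrative.

```python
import math


def delta_n_direct(sad, svd, beta):
    """Eq. (6): normalized distance between the hit points of two neighbouring voxels."""
    return (sad / svd) * abs(math.cos(beta))


def delta_n_stepwise(sad, sdd, svd, unit, beta):
    """Same quantity assembled from the individual relations."""
    s_voxel = (sad / sdd) * unit                   # assumed voxel size (see lead-in)
    f_g = sdd / svd                                # Eq. (4): geometric factor
    delta_u = s_voxel * f_g * abs(math.cos(beta))  # Eq. (1) with Eq. (2)
    return delta_u / unit                          # Eq. (3) solved for delta N


# Illustrative values in millimetres: SAD = 800, SDD = 1000, detector unit = 0.127.
on_axis = delta_n_direct(800.0, 800.0, 0.0)
off_axis = delta_n_stepwise(800.0, 1000.0, 900.0, 0.127, math.pi / 3)
```

For a voxel on the rotation axis at β = 0 the result is exactly 1, so neighbouring voxels land about one detector unit apart; as |cos β| shrinks, they land closer together and increasingly share detector units.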

Meanwhile, in Fig. 2, we can see that for adjacent voxels in the same plane, some of them are cast into adjacent detector grids (as Ray 1 and Ray 3 in Fig. 2), and some into different grids (as Ray 3 and Ray 4 in Fig. 2). Moreover, voxels whose beamlet paths are quite close to each other (as Ray 1 and Ray 2 in Fig. 2) will be projected into the same detector grids. Note that, since each thread is assigned to one image voxel and each detector grid to one memory address on the GPU, when two voxels are cast into adjacent or identical detector grids, the underlying two threads will try to write data to the same memory address on the GPU simultaneously, which leads to write hazards; this is what we call the inter-thread interference problem, or the thread racing problem.
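The write hazard can be illustrated without a GPU. The sketch below is a deterministic toy model, not real concurrency: it replays the worst-case interleaving in which every "thread" reads the detector entry before any of them writes back, and compares it with a serialized update. The function names are hypothetical.

```python
def racing_accumulate(detector, address, contributions):
    """Replay an interleaved read-modify-write: all reads happen before any write."""
    stale = [detector[address] for _ in contributions]  # every thread sees the old value
    for old, c in zip(stale, contributions):
        detector[address] = old + c                     # last writer wins, the rest are lost


def serialized_accumulate(detector, address, contributions):
    """Each update completes before the next one starts."""
    for c in contributions:
        detector[address] = detector[address] + c


raced = [0.0]
racing_accumulate(raced, 0, [0.25, 0.5])
safe = [0.0]
serialized_accumulate(safe, 0, [0.25, 0.5])
```

In the racing case one contribution is silently dropped, so the projection value is wrong even though no error is raised.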

The analysis implies that if the thread grids are allocated to image voxels one by one, some threads will race against each other when accessing GPU memory. Unless a specific strategy is taken, this phenomenon is certain to happen and is impossible to avoid. Meanwhile, Fig. 2 also shows that if thread grids are allocated in axial planes (horizontally), the worst case will show up in the central axial plane at all projection angles. However, for the planes above or below the central plane, the impact of the thread-racing (inter-thread interference) problem is softened because of the cone-beam geometry.

It is noted that although the geometric analysis above is based on the axial plane, because of the symmetry of the cone-beam geometry about the central axis, the discussion is also applicable to the vertical planes. Similar conclusions can be drawn when the thread grids are allocated in the vertical planes.

Combating strategy by optimizing thread-grid allocation

Based on our discussion, the inter-thread interference phenomenon always occurs to a certain degree, which becomes the major obstacle for GPU acceleration. To combat the problem, what we need is a concrete solution that reduces the occurrence frequency as far as possible and serializes the residual racing threads in the same process. Arising from the idea that the cone-beam geometry can be utilized to buffer the impact of thread racing, we propose a scheme of optimizing the thread-grid allocation to achieve GPU acceleration. The method comprises three key steps:

- (a)

Allocate thread grids in the vertical planes (or vertically)

We denote the axial direction as the horizontal direction (as in Fig. 3a) and the coronal and sagittal directions as the vertical directions (as in Fig. 3b). By allocating threads vertically, the thread-racing frequency of the voxels along the same X-ray path is much decreased. However, as a side-effect, the worst case of inter-thread interference is shifted from the central axial plane at all projection angles to the vertical planes at angles perpendicular to the detector plane, where β is equal to 90° or 270°. So the second step is needed to solve this side-effect problem.

Fig. 3

Conventional threads are allocated in horizontal planes (a). In the proposed method, the threads are allocated in vertical (coronal) planes (b), and the thread-plane direction is interchanged at certain angles from coronal to sagittal (c)

- (b)

Interchange the thread-plane direction at the critical projection angles

In fact, as illustrated in Fig. 3c, the worst case of inter-thread interference induced by Step (a) can be easily solved by interchanging the thread-plane direction from the coronal planes to the sagittal planes at certain projection angles. Here we call the angles for thread-plane direction interchange the critical angles. The critical angles depend on the imaging and scanner specifications, including SAD, SDD, and \(S_{unit}\), but can be easily obtained by simulation.

- (c)

Serialize the residual interfering threads by atomic operations

By the two steps above, the thread-racing occurrence frequency can be much decreased. To handle the residual threads that still interfere with each other, we use the atomic operations provided by the GPU to serialize the read-and-write operations among these threads. The mechanism of atomic operations is like an address access lock: at any moment, only one thread is authorized, and all the others are forced to wait in a queue [20].
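Steps (a) and (b) amount to a per-angle choice of thread-plane direction. A minimal sketch of that choice is given below, under one reading of the text: coronal planes are the default, and sagittal planes are used inside a window around 90° and 270°. The function name `thread_plane` is hypothetical, and the default windows simply reuse the critical angles reported in "Experiment and results" section; other scanner geometries would need their own values.

```python
def thread_plane(beta_deg, windows=((80.0, 100.0), (260.0, 280.0))):
    """Return the thread-plane direction to use at projection angle beta_deg."""
    beta = beta_deg % 360.0
    for low, high in windows:
        if low <= beta <= high:
            return "sagittal"  # interchange near the worst-case angles of step (a)
    return "coronal"           # default vertical allocation


schedule = [thread_plane(b) for b in (0, 90, 180, 270)]
```

Step (c) then applies to whichever direction is selected: the accumulation into the detector array would use an atomic add so that the remaining collisions are serialized.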

Implementation outline

The key idea of the acceleration method is described above. For reference, the core framework is presented in the form of pseudo-code in Table 2. Once the initialization on the GPU is completed, the main processes can be implemented as a CUDA kernel function.

Experiment and results

For method validation, computational simulations are performed using the Shepp-Logan phantom. The simulation scenario specifications are similar to our in-house CBCT scanner geometry [21]: the square detector panel has 512 × 512 units, and the size of each unit is 0.127 mm; the source-to-axis distance is 80 cm, and the source-to-detector distance 100 cm; projections are calculated over 360° with a 1° interval.

The program is deployed on a Windows Server 2012 workstation with 32-bit single precision. The CPU is Intel Xeon E5-2620, which offers two processors with 12 cores running at a frequency of 2.1 GHz. The GPU is nVidia Tesla K20M. Its compute capability version number is 3.5, and it has 2696 cores running at a frequency of 0.71 GHz. For comparison, the voxel-driven forward projection method is programmed and deployed on the same platform. Since multi-thread parallelization of the voxel-driven forward projection algorithm on the CPU also has to deal with the inter-thread interference problem among CPU threads, which is beyond the scope of this study, the algorithm is implemented on a single CPU thread, and the single-threaded running time is recorded as the benchmark for performance assessment. Besides, in order to achieve high accuracy, an 8-subvoxel splitting strategy is used: each voxel is first divided into 8 cubic subvoxels, and then each subvoxel is forward projected onto the detector with 1/8 of the weight of the parent voxel value. Note that the recorded times only account for the forward projection kernel, excluding the time of transferring data between CPU and GPU.
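The 8-subvoxel splitting can be sketched as follows. The function name `split_voxel` and the tuple layout are assumptions for illustration; the geometry follows from the description: a cube of side `size` splits into eight cubes of side `size / 2`, whose centres are offset by `size / 4` along each axis.

```python
def split_voxel(value, x, y, z, size):
    """Return (weight, x, y, z) for the eight subvoxels of one cubic voxel."""
    q = size / 4.0  # offset of each subvoxel centre from the parent centre
    return [(value / 8.0, x + sx * q, y + sy * q, z + sz * q)
            for sx in (-1, 1) for sy in (-1, 1) for sz in (-1, 1)]


subvoxels = split_voxel(8.0, 0.0, 0.0, 0.0, 1.0)
```

Each returned entry would then be forward projected like an ordinary voxel, so the total projected weight stays equal to the parent voxel value while the footprint on the detector is sampled more finely.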

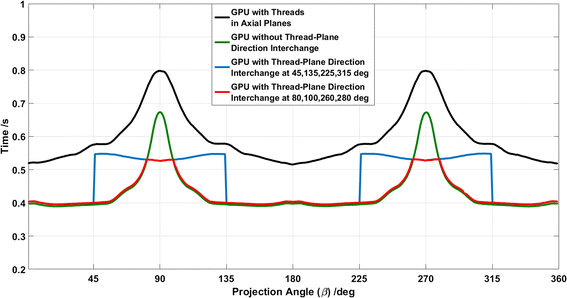

To obtain the optimal interchange angles, or critical angles, we first ran the GPU-enabled program without the thread-plane interchange, and collected the computing times (green curve in Fig. 4). Then, we interchanged the thread plane at 45°, 135°, 225°, and 315°, and obtained the new computing times (blue curve in Fig. 4). When the two time curves were plotted together, they intersected with each other, and the intersection angles were the optimal interchange angles. In this scenario, we can see that the best interchange angles are 80°, 100°, 260°, and 280°, which are thus used as critical angles for thread-plane direction interchange.
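Reading the intersections off two measured timing curves can be automated. The sketch below is one possible way to do it, not the procedure used in the study: it looks for sign changes in the difference of the two curves and linearly interpolates the crossing angle. The function name is hypothetical and the sample timings are made-up numbers for illustration only.

```python
def crossing_angles(angles, t_a, t_b):
    """Angles at which timing curve t_a intersects timing curve t_b."""
    crossings = []
    for i in range(len(angles) - 1):
        d0 = t_a[i] - t_b[i]
        d1 = t_a[i + 1] - t_b[i + 1]
        if d0 == 0.0:
            crossings.append(float(angles[i]))        # curves touch exactly at a sample
        elif d0 * d1 < 0.0:
            frac = d0 / (d0 - d1)                     # linear interpolation of the zero
            crossings.append(angles[i] + frac * (angles[i + 1] - angles[i]))
    if t_a[-1] == t_b[-1]:
        crossings.append(float(angles[-1]))
    return crossings


# Synthetic example: a flat curve against a rising one.
found = crossing_angles([0, 10, 20, 30], [1.0, 1.0, 1.0, 1.0], [0.0, 0.5, 1.5, 2.0])
```

With a 1° sampling interval, as in the experiment, the interpolated crossings would be accurate to well within one projection step.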

Computation time curves of various methods: threads are allocated in axial planes and racing threads are resolved with atomic operations (black); threads are allocated in vertical planes and racing threads are resolved with atomic operations without thread-plane direction interchange (green); threads are allocated in vertical planes and racing threads are resolved with atomic operations with thread-plane direction interchange at given angles (blue); threads are allocated in vertical planes and racing threads are resolved with atomic operations with thread-plane direction interchange at critical angles (red)

As reference, the GPU processing time of using atomic operations to resolve all racing conditions is plotted as the black curve, and the time without thread-plane interchange is drawn as the green curve in Fig. 4. The computation times of the various methods are listed in Table 3, with the CPU computation time as benchmark. We find that the GPU acceleration ratio of the proposed method is as high as 105.

Discussion and conclusion

As detailed in "Methods" section, the proposed method consists of three key steps. The first two steps are mainly aimed at reducing the occurrence frequency of inter-thread interference. From the results in Table 3, we can see that both steps contribute to the calculation acceleration, and Fig. 4 unveils their respective roles: (1) comparing the method of allocating thread grids in axial planes (black curve) and in vertical planes (green curve), we can see that optimizing the thread-grid plane saves more than 20% of the processing time; (2) comparing the method with and without interchanging the thread-grid plane direction, i.e. the red and green curves respectively, we can conclude that this operation is effective in reducing the peak computing time.

Besides, in Fig. 4, we can see a stair jump effect in the computation time (as in the blue curve) after we interchange the thread grids from coronal planes to sagittal planes. Since the three-dimensional image matrix is stored voxel by voxel in linear memory addresses on GPUs, when a thread accesses the memory it not only reads the data at the specified address, but also loads the data at adjacent addresses into the GPU memory cache for possible further usage: this mechanism is what we call memory coalescing, which is extremely beneficial for fast data access [22]. In our method, thread grids are initially bound to voxels that are stored in coalesced addresses. When we interchange the thread-plane direction, the address coalescing condition is broken, and data access takes more time.
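The effect on memory addresses can be made concrete with a small sketch. It assumes, purely for illustration, that the volume is stored with x as the fastest-varying index, that a coronal plane spans x and z, that a sagittal plane spans y and z, and that consecutive threads walk along the first in-plane axis; the actual layout used in the study is not stated. The function names are hypothetical.

```python
def linear_index(x, y, z, nx, ny):
    """Linear address of voxel (x, y, z) in an x-fastest volume."""
    return x + nx * (y + ny * z)


def thread_stride(plane, nx, ny):
    """Address distance between the voxels of two consecutive threads in a plane."""
    origin = linear_index(0, 0, 0, nx, ny)
    if plane == "coronal":
        return linear_index(1, 0, 0, nx, ny) - origin  # neighbours along x
    if plane == "sagittal":
        return linear_index(0, 1, 0, nx, ny) - origin  # neighbours along y
    raise ValueError("unknown plane: " + plane)


strides = {p: thread_stride(p, 512, 512) for p in ("coronal", "sagittal")}
```

Under these assumptions, consecutive threads in a coronal plane touch neighbouring addresses, while in a sagittal plane they are a full row apart, which is consistent with the slower data access observed after the interchange.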

In terms of the critical angles, to investigate their dependence on the CBCT geometric specifications, several scenarios were set up with SAD/SDD ranging from 0.6 to 1. The critical angles were obtained in the same way as in "Experiment and results" section. Only a slight dependence is observed, and the critical angles in different scenarios are fairly close to each other, around 80°, 100°, 260°, and 280°. So we can infer that, from a practical perspective, this set of critical angles performs effectively, and they can be used as empirical values.

In summary, we propose a GPU acceleration method for calculating voxel-driven forward projection for cone-beam CT. The method is composed of three key steps and is easy to implement. The experimental results demonstrate its effectiveness and efficiency in handling the inter-thread interference problem, and a remarkable speedup ratio, as high as 105, has been achieved. It should be noted that the CPU implementation runs on a single thread. A multicore CPU implementation using 6 cores could be accelerated and run faster (for example using OpenMP and streaming SIMD extensions (SSE)), which would reduce the speedup, but a dedicated approach is also required to combat the thread racing problem on the CPU.

Besides, using a more sophisticated forward projection method is likely to achieve improved accuracy. For example, Long et al. [16] proposed a voxel-driven method combining a voxel model and a detector unit response. In their method, the boundaries of each cubic voxel are first ray-cast onto the detector to generate a polygonal pattern, and then the pattern is multiplied by a trapezoid/rectangular function to produce the respective forward projection footprint. As discussed in [16], highly realistic projection images can be delivered, but at the expense of tremendously increased computational complexity compared with the method proposed here. In the meantime, as in [17], it is a scatter operation in nature as well, so special GPU acceleration approaches are also required to combat the thread racing problem (denoted as read-modify-write errors in [17]). Therefore, to some extent, method selection is a trade-off between accuracy and computational complexity, and it all depends on the application requirements.

We believe the proposed acceleration method is likely to serve as a critical module to develop iterative reconstruction and correction methods for CBCT imaging, as in our case, where this method has already been incorporated into our iterative algorithm development platform and is working properly [23]. Since the algorithm is programmed for research only, we believe that, with further coding optimization, a higher speedup can be achieved.

Abbreviations

- GPU: graphic processing unit

- CT: computed tomography

- CBCT: cone beam computed tomography

- SDD: source-to-detector distance

- SAD: source-to-axis distance

- SVD: source-to-voxel distance

References

- 1.

Horner K, Islam M, Flygare L, Tsiklakis K, Whaites E. Basic principles for use of dental cone beam computed tomography: consensus guidelines of the European Academy of Dental and Maxillofacial Radiology. Dentomaxillofacial Radiol. 2009;38:187–95.

- 2.

Sharp GC, Jiang SB, Shimizu S, Shirato H. Prediction of respiratory tumour motion for real-time image-guided radiotherapy. Phys Med Biol. 2004;49:425.

- 3.

Dobbe JGG, Curnier F, Rondeau X, Streekstra GJ. Precision of image-based registration for intraoperative navigation in the presence of metal artifacts: application to corrective osteotomy surgery. Med Eng Phys. 2015;37:524–30.

- 4.

Chang S-H, Lin C-L, Hsue S-S, Lin Y-S, Huang S-R. Biomechanical analysis of the effects of implant diameter and bone quality in short implants placed in the atrophic posterior maxilla. Med Eng Phys. 2012;34:153–60.

- 5.

Patel S, Durack C, Abella F, Shemesh H, Roig M, Lemberg K. Cone beam computed tomography in endodontics – a review. Int Endod J. 2015;48:3–15.

- 6.

Schulze R, Heil U, Groß D, Bruellmann DD, Dranischnikow E, Schwanecke U, et al. Artefacts in CBCT: a review. Dentomaxillofacial Radiol. 2011;40:265–73.

- 7.

Beister M, Kolditz D, Kalender WA. Iterative reconstruction methods in X-ray CT. Phys Med. 2012;28:94–108.

- 8.

Eklund A, Dufort P, Forsberg D, LaConte SM. Medical image processing on the GPU – past, present and future. Med Image Anal. 2013;17:1073–94.

- 9.

Pratx G, Xing L. GPU computing in medical physics: a review. Med Phys. 2011;38:2685–97.

- 10.

Flores L, Vidal V, Mayo P, Rodenas F, Verdu G. Iterative reconstruction of CT images on GPUs. Conf Proc IEEE Eng Med Biol Soc. 2013;2013:5143–6.

- 11.

Zhao X, Hu J, Zhang P. GPU-based 3D cone-beam CT image reconstruction for large data volume. Int J Biomed Imaging. 2009;2009:1–8.

- 12.

Noël PB, Walczak AM, Xu J, Corso JJ, Hoffmann KR, Schafer S. GPU-based cone beam computed tomography. Comput Methods Programs Biomed. 2010;98:271–7.

- 13.

Hillebrand L, Lapp RM, Kyriakou Y, Kalender WA. Interactive GPU-accelerated image reconstruction in cone-beam CT. Proc SPIE. 2009;7258:72582A–1–72582A–8.

- 14.

Zeng GL, Gullberg GT. Unmatched projector/backprojector pairs in an iterative reconstruction algorithm. IEEE Trans Med Imaging. 2000;19:548–55.

- 15.

De Man B, Basu S. Distance-driven projection and backprojection in three dimensions. Phys Med Biol. 2004;49:2463–75.

- 16.

Long Y, Fessler JA, Balter JM. A 3D forward and back-projection method for X-ray CT using separable footprint. IEEE Trans Med Imaging. 2010;29:3–6.

- 17.

Wu M, Fessler J. GPU acceleration of 3D forward and backward projection using separable footprints for X-ray CT image reconstruction. In Proceedings international meeting on fully 3D image reconstruction 2011; p. 56–9.

- 18.

Gao H. Fast parallel algorithms for the X-ray transform and its adjoint. Med Phys. 2012;39:7110–20.

- 19.

Nguyen V-G, Jeong J, Lee S-J. GPU-accelerated iterative 3D CT reconstruction using exact ray-tracing method for both projection and backprojection. In 2013 IEEE nuclear science symposium and medical imaging conference 2013; p. 1–4.

- 20.

NVIDIA. CUDA C programming guide. Program Guid. 2022. p. 1–261. http://docs.nvidia.com/cuda//pdf/CUDA_C_Programming_Guide.pdf.

- 21.

Yi DU, Xiangang W, Xincheng X, Bing LIU. Automatic X-ray inspection for the HTR-PM spherical fuel elements. Nucl Eng Des. 2014;280:144–9.

- 22.

Cook S. CUDA programming: a developer's guide to parallel computing with GPUs. Newnes; 2012. https://WWW.amazon.com/CUDA-Programming-Developers-Computing-Applications/dp/0124159338.

- 23.

Du Y, Wang X, Xiang X, Wei Z. Evaluation of hybrid SART + OS + TV iterative reconstruction algorithm for optical-CT gel dosimeter imaging. Phys Med Biol. 2016;61:8425–39.

Authors' contributions

YD carried out most of the study, numerical implementation and statistical analysis. GY helped in algorithm development and programming. XG and XX helped in the algorithm optimization. YP contributed to the result analysis and manuscript review. All authors read and approved the final manuscript.

Acknowledgements

This work was jointly supported by the National Natural Science Foundation of China (Nos. 61571262, 11575095), and the National Key Research and Development Program (No. 2022YFC0105406).

Competing interests

The authors declare that they have no competing interests.

Availability of data and supporting materials

The simulation phantom is derived from the general Shepp-Logan phantom. The pseudo-code of the algorithm is listed in Table 2, and the calculation and programming environment is detailed in "Experiment and results" section. As part of an iterative reconstruction and artefact correction algorithm development platform, the code is not open-source at the moment.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Du, Y., Yu, G., Xiang, X. et al. GPU accelerated voxel-driven forward projection for iterative reconstruction of cone-beam CT. BioMed Eng OnLine 16, 2 (2017). https://doi.org/10.1186/s12938-016-0293-8

DOI : https://doi.org/10.1186/s12938-016-0293-8

Keywords

- Cone-beam CT

- GPU

- Forward projection

Source: https://biomedical-engineering-online.biomedcentral.com/articles/10.1186/s12938-016-0293-8
